26 comments

Ari, from my perspective we consistently use R for census data analysis to prove out correlations between advertisers’ performance trends and demographic, psychographic, and behavioral data. In terms of packages, it’s primarily done on an ad hoc basis (downloaded from the web, based on a census search) rather than taken directly from a package.

My current workflow when I use Census data involves the tidycensus package, along with the tidyverse suite of packages like dplyr, purrr, and ggplot2. I typically want to know where people live and how they are distributed, and sometimes economic data like income.

Hi Ari – I am doing an analysis on factors related to poverty in specific census tracts. Associated information includes data on income, wealth and earnings, education, crime rates, housing, health and employment for individuals and households that are living at 2 and 3 times the poverty line. This helps us identify ways in which to help lift people out of poverty.

Ari, here’s a list of packages I’ve used in the recent past for my census-related projects: acs, acsprofiles, dplyr, purrr, tidyverse, tigris, RSocrata, ggplot2, stringr, and leaflet. I also look at data presented on the censusreporter.org site as a guide. All of my projects are focused on understanding data for neighborhoods, so I’m looking at tracts or smaller areas. The variables I’m looking at are similar to those in the Social Vulnerability Index (https://svi.cdc.gov/) or those presented in the Census Reporter profiles (https://censusreporter.org/profiles/14000US18097353600-census-tract-3536-marion-in/). I’m glad you are undertaking this because I’ve found the census website of limited use in the past.

I use tidycensus for obtaining the Census data, dplyr for manipulating it, and leaflet for mapping. My projects typically involve overlaying child welfare data (I perform analyses using government agencies’ administrative data) with Census data on socioeconomic characteristics known to be related to poverty and involvement with child protective services.

Looking forward to seeing the courses you put together!

Mike, I am interested in learning more about your analyses. What government data do you use, and which socioeconomic characteristics known to be related to poverty do you look at? Thanks for anything you can share.

This is great, Ari! I can’t wait to see what you put together.

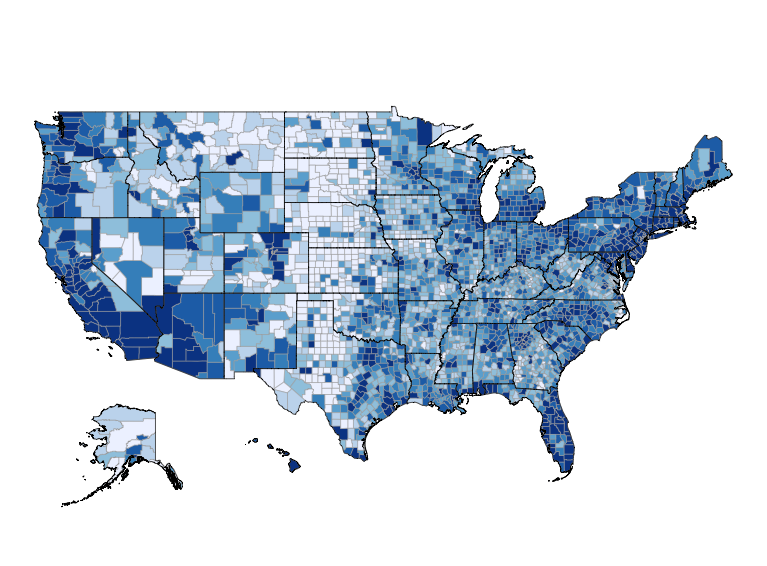

I’m biased but I use tidycensus and tigris for data acquisition; the tidyverse packages and sf for data processing; and tmap and ggplot2 for visualization. I also use data downloaded from NHGIS (http://www.nhgis.org) and IPUMS (http://www.ipums.org). For these sources, the new ripums package (https://cran.r-project.org/web/packages/ripums/index.html) looks promising. Recent projects have looked at tracking neighborhood shifts in racial composition; modeling migration trends of millennials and baby boomers; and building public-facing visualization tools (with Shiny and other platforms like Mapbox). I also use R a lot to quickly generate datasets for use in my teaching.

I think there’s something to be said for using a lower-level package like httr to work with the Census API directly, which is what I’ve done in my research and teaching so far (though tidycensus looks beautiful).
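To make the lower-level approach concrete, here is a minimal sketch of building a Census Data API request by hand. It is written in Python with the standard library rather than with httr, and the year, dataset path, variable code, and FIPS codes are illustrative assumptions, not something taken from this thread. The `census_url` helper is hypothetical; it only builds the URL and does not send the request.

```python
from urllib.parse import quote, urlencode

BASE = "https://api.census.gov/data"


def census_url(year, dataset, variables, for_clause, in_clause=None, key=None):
    """Build a Census Data API query URL (hypothetical helper, no request is sent)."""
    params = [("get", ",".join(variables)), ("for", for_clause)]
    if in_clause:
        params.append(("in", in_clause))
    if key:
        # An API key is optional for light use; pass your own if you have one.
        params.append(("key", key))
    # Keep ':', '*' and ',' readable; spaces are percent-encoded as %20.
    query = urlencode(params, safe=":*,", quote_via=quote)
    return f"{BASE}/{year}/{dataset}?{query}"


# Assumed example: total population (B01003_001E) for every tract
# in Marion County, Indiana (state 18, county 097), 2016 ACS 5-year.
url = census_url(
    2016,
    "acs/acs5",
    ["NAME", "B01003_001E"],
    "tract:*",
    "state:18 county:097",
)
print(url)
```

Fetching that URL with any HTTP client should return JSON shaped as an array of rows with the column names in the first row, which is the part the higher-level packages reshape for you.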

I’m most interested in tract-level data, and in geocoding addresses or coordinates within Census tracts using the Geocoding Services API (also with httr). Like the others above, I use tidyverse packages for all data processing and visualization, and both ggplot2 and leaflet for mapping.

It’s exciting to me as a sociology grad student doing computational work to see the Census invest in teaching data science!

I don’t use R much yet, but I work for an MPO and we try to identify underserved populations to evaluate transportation projects for disparate impacts in order to comply with Title VI of the Civil Rights Act. We generally look at no-vehicle households, poverty, race, age, limited English proficiency, and disability status at the block group or census tract level. I would be interested to see if there’s a way to standardize analysis for MPOs across the US.

Real estate investors would want to know the relative economic health of an area or zip code by looking at population growth, employment growth, income level and demographics.

I’ve not attempted many projects, but I’ve used the acs package to load data. I am attempting now to use mapping packages to prorate Census facts to political groupings of precincts via area.
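For anyone curious what prorating by area can look like, here is a minimal sketch in plain Python. It assumes the overlap areas between tracts and precincts have already been computed with a mapping or GIS tool, and that population is spread evenly within each tract, which is a strong assumption. All names and numbers are made up for illustration.

```python
from collections import defaultdict


def prorate_by_area(source_values, source_areas, overlaps):
    """Allocate each source unit's value to targets in proportion to overlap area.

    overlaps is a list of (source_id, target_id, overlap_area) tuples.
    Assumes the value is uniformly distributed within each source unit.
    """
    result = defaultdict(float)
    for source_id, target_id, overlap_area in overlaps:
        share = overlap_area / source_areas[source_id]
        result[target_id] += source_values[source_id] * share
    return dict(result)


# Hypothetical tracts (T1, T2) and precincts (P1, P2).
tract_pop = {"T1": 1000, "T2": 600}
tract_area = {"T1": 4.0, "T2": 3.0}
overlaps = [
    ("T1", "P1", 1.0),  # a quarter of T1 falls in P1
    ("T1", "P2", 3.0),  # the rest of T1 falls in P2
    ("T2", "P2", 3.0),  # all of T2 falls in P2
]

precinct_pop = prorate_by_area(tract_pop, tract_area, overlaps)
print(precinct_pop)
```

This works for counts such as population or households. Medians and rates cannot be prorated this way; the underlying counts need to be allocated first and the ratio recomputed afterward.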

This is fabulous! I wrote the censusr package, which I mostly use for ACS data tables (I’m a transportation engineer). We’re moving our stuff over to tidycensus, which integrates nicely with tigris, sf, and leaflet.

Hi Ari. I generally use the tidyverse in my work with the Census data. I have a custom script that downloads from the Census FTP site.

Once the data are downloaded, we not only track national-level trends for multiple measures but also download the county-level data and aggregate the labor force and unemployed counts to our specific boundaries to estimate the average unemployment rate. The unemployment data are also used in various models to determine their relationship to production, to control for the economy when examining other market factors, and for predictive analytics.
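The aggregation step described above can be sketched roughly as follows, in plain Python. The county IDs, region names, and counts are hypothetical, and the function name is an assumption for illustration. The idea is to sum labor force and unemployed counts within each custom region and then divide, rather than averaging county rates.

```python
from collections import defaultdict


def regional_unemployment_rate(counties, region_of):
    """Sum counts per region, then compute unemployed / labor force.

    counties is a list of (county_id, labor_force, unemployed) tuples;
    region_of maps each county_id to a custom region name.
    """
    totals = defaultdict(lambda: [0, 0])  # region -> [labor_force, unemployed]
    for county_id, labor_force, unemployed in counties:
        region = region_of[county_id]
        totals[region][0] += labor_force
        totals[region][1] += unemployed
    return {
        region: unemployed / labor_force
        for region, (labor_force, unemployed) in totals.items()
        if labor_force > 0
    }


# Hypothetical counties A and B grouped into one custom region.
counties = [("A", 1000, 50), ("B", 3000, 90)]
region_of = {"A": "north", "B": "north"}

rates = regional_unemployment_rate(counties, region_of)
print(rates)
```

In this made-up case the pooled rate is 140 / 4000, while a simple mean of the two county rates (5% and 3%) would give 4%, so summing the counts first matters when counties differ in size. In R the same logic would be a `group_by()` followed by `summarise()` in dplyr.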

I have tried to teach students sampling techniques with the samplingbook package. Check it out.

I work at a think tank, and I use the censusapi package constantly, along with the tidyverse packages. Occasionally, I use tigris and ggplot2 to map my data.

As for the analyses, my work focuses on demographic and economic variation across US metro areas, so I do a lot of comparisons over time and across places. Dealing with geography changes, ACS question changes, and similar comparability issues is one of my major challenges.

Ari – the value of choroplethr is already constrained by its focus on US census data. I work with data from many countries, and streamlining things for the US market detracts from its utility everywhere else. Perhaps develop the courses and packages with a view to greater generalisability.

Ari, for me it looks like I use tidycensus, tidyverse, & stringr. I used them to look at Puerto Rico last year, very sad to think that data is not worth so much today. But can I put in a good word for transmute, tho?

Finding the right table, and finding out if it’s really populated at the level of geography you need might be good for a course.

Since we all have to know about the American Civil War now, I got the idea that it might be interesting to look at the locales of famous battles and other dislocations, such as Sherman’s march to the sea, to see how they fared over time population-wise. A longitudinal inventory of geographic data-containing units would aid in this; you could probably get a pretty good panel going back multiple censuses that would be interesting even if it didn’t go back all the way. This isn’t a professional interest for me. I just thought I’d mention it: with all the stats and geo brainiacs reading this, someone is bound to come up with something and do it.

Hi. I’ve been working with census tract level data from the decennial census and the ACS for estimating rates of disease in small areas. There is an emphasis on small area analysis in public health in order to better target interventions to the areas most in need. I haven’t used any specific package within R at this time. Changing census tract boundaries complicate our analyses when we try to investigate rate changes per area over time. We’re also attempting to identify the best method of generating or identifying single-year estimates for census tract population counts (again, we’re focusing on disease rate by census tract and disease rate change over time).

I’m new to R. I’ve mostly been using SAS in batch work and have recently shifted from SAS/IntrNet to PHP/MySQL for online display. I work with American Community Survey (ACS) data. So far I’ve used the “haven” package to read SAS data into R.

I do small area estimation using ACS data as a way to augment existing survey data that might be aggregated at a higher level. For example, I may have survey estimates at the country level but would like estimates at the ZIP Code Tabulation Area level. Some methods I have used in the past include IPUF and model-based approaches like GREGWT and ILNA.

I used the basic packages: readr, tidyr, dplyr, stringr, and ggplot2. I was working on a small pro bono project here in Clark County, IN.

I do however have a small demo project planned for early next year. I’m planning to analyze income, population, etc., using R within Tableau, prepare a dashboard or two, and package it all in a twbx.

I use R for prototyping a variety of analytic processes that will ultimately be run in SAS. I’m the author of a CRAN package (nhanesA) that simplifies data retrieval from the National Health and Nutrition Examination Survey (NHANES) website.

Most of the comments already cover what I might suggest. In particular I liked the comment emphasizing “lower level” approaches and data explanations.

I would emphasize that knowing what data is available at what geographical level is a difficult subject for people like myself who are not in your profession. I have probably spent a couple hundred hours discovering exactly what data is available at each level. This was not and cannot be explained by census customer service or nearly all census bureau contacts. Many of us wannabe users are not at the level of the professionals explaining the census data. I’m interested in block group level data. My perspective, for my purposes, is that everyone obsesses over MOEs, which, while appropriate for true statistical applications, may not matter if you need to guide plans that require any kind of data or the work fails. I’ve learned to select data more carefully, subjectively judging its reliability from the geographical level and the MOE.

My point is that it would have saved me enormous effort to have some simplified way to see the data available by geographical level without getting into the seemingly mandatory 800-page documents from the census about data and field characteristics. Of course, the next step is then finding where the data is, and in what file, before using tidycensus to retrieve it.

I don’t want someone to presume how to analyze data I want. I simply want to know what data is out there, where it is, and how to get it into a spreadsheet.

mitch albert, phd (amateur census statistical user)

I think some of the visual tools and techniques that you have provided and taught are great for understanding the data and what’s on the ground today.

Our group does transportation modeling (future forecasting), and for that we need to build off of current data (census information). We usually just use standard R packages and functions (nothing fancy) to re-tabulate and format census data into our “Traffic Analysis Zones” (TAZ). Most of our GIS processing and visualization occurs outside of R. R is used to do tapplys and similar to rebuild the census tables and data into our specific spatial boundaries.

For pulling ACS data, my colleagues and I use the acs package, with tidyverse packages to manipulate the tables. The stringr package, in particular, helps us work with table and column names.

The project I’m currently working on examines the infant microbiome as a mechanism by which a neighborhood’s environmental quality and the accessibility of nutritious food influence neurological development and school readiness outcomes. We’re using ACS stats relating to neighborhood demographics and socioeconomic status as covarying factors (as opposed to pollution or nutrition, which will directly impact the microbiome).

I am using census data to analyze police shootings. I need a large amount of data at the block and block group level. It is painful to download these small-area data with API-based packages, so I am developing a package called totalcensus, which downloads summary files and extracts data from them directly. The package is here: https://github.com/GL-Li/totalcensus. I also use data.table, dplyr, ggplot2, ggmap2, and stringr.